Customer satisfaction is key to running a successful business. That’s obvious to most everyone. What is less obvious is exactly how you should go about determining whether or not your customers are, in fact, satisfied. If you’ve been struggling with trying to measure this, customer satisfaction (CSAT) surveys may be just the ticket.

Why CSAT surveys are important

We’ve gone into more detail about CSAT before, but, in short, CSAT, or Customer Satisfaction Score, measures how satisfied your customers are with a business, feature, or interaction.

Your CSAT score matters because good customer support helps build customer loyalty. CSAT surveys are tools you can use both to measure your customer satisfaction score and to solicit feedback from customers about products, features or services.

How to write good CSAT survey questions

Working with good data is key when it comes to CSAT, so it’s important to ask the right questions. Here’s a list of some things to consider when formulating your CSAT survey:

- Keep it short: The best kind of surveys are ones that don’t take a lot of time and effort to answer, so keep things short and sweet! People are much more likely to respond when you do, and you want as many responses as possible to get an accurate result.

- Keep it targeted: In much the same vein, make sure that your CSAT survey is targeted so you actually collect data that is actionable. Now is not the time for general questions — direct your users’ attention to one particular product, service or feature.

- Think about delivery: Most surveys are sent out via email, and the response rate of those is dismal. It helps to meet users where they actually are, rather than sending out emails en masse. The best way to do this? Send a quick survey in-app, preferably immediately after a user has logged in or used a feature for the first time.

- Experiment with form: If you’re sending out surveys that are set up exclusively for text-based responses, you’re not exploring all the avenues available to you. So experiment with the form of these questions and answers. Consider asking users for an emoji rating or to score something on a numerical scale instead.

Types of grading scales

As we just mentioned, experimenting with the form of questions and the rating scales you use can help inspire people to respond. You just need to be clear and make it easy for them to answer. The rating scales typically used to score CSAT questions are:

- Likert scale

- Binary scales

- Multiple choice

- Open-ended

Likert scale questions

This type of rating scale provides users with a list of options ranging from one extreme to the other and often (but not always) includes a neutral response. So, for example, scales that look like this:

How satisfied were you with the product?

- Very satisfied

- Somewhat satisfied

- Neither satisfied nor dissatisfied

- Somewhat dissatisfied

- Very dissatisfied

fall under the category of Likert scales.

Binary scales

Pretty self-explanatory, these scales give you a binary choice to pick between. An example of a scale like this would be:

Were you satisfied with the product?

- Yes

- No

Scales like these leave no room for interpretation or nuance, but they do make it easier to arrive at a conclusion about something if you want that conclusion to be very definite. For example, if more people say they hate a feature than love it, you know it’s probably time to pull that feature.

Multiple choice questions

These give you a way to find out a little bit more information about your users. They look a little something like this:

Which of the following features do you use the most:

- Option A

- Option B

- Option C

Given that you’re the one providing the answers, their options are definitely constrained, but questions like this can still be helpful in providing deeper insight into your customers' wants, needs and preferences.

Open-ended questions

Want to give your customers a chance to tell you how they feel about your product in their own words? Ask them some open-ended questions! Here’s what that looks like:

How was your experience using our product?

Questions like these give customers a way to elaborate and give you more information in the process, but they also take a lot longer to answer and require more effort, so response rates are bound to be lower. They also aren’t very easy to evaluate or score, so are probably better used sparingly to reach out to specific customers about specific interactions.

CSAT question examples

There are several categories of questions you can ask users:

- Usage

- Product

- Demographics

- Psychographic

- Satisfaction

Usage question examples

Want to know how often your customers are using your products? Ask them questions like:

- How often do you use (insert product name)?

- How often do you use (insert feature name)?

- Would (insert change) cause you to use this product more or less frequently?

- Which of the following features do you use the most frequently?

You can then provide a Likert scale, a binary choice or a text field for their response.

Product question examples

These questions can give you great insight into exactly how your customers are using your product, what they like and, perhaps most importantly, what they want you to fix. This sort of insight is invaluable when it comes to designing and amending your product roadmap. Here are some examples of these kinds of questions:

- What problem does (insert feature or product) help you to solve?

- Are you enjoying (insert functionality)?

- How do you feel about (insert product)?

- Which of the following features do you find the most useful?

- How much value for money would you say our product provides?

- Do you have any feature requests?

- Has (insert feature or product) made (insert problem) easier to solve?

- Has (insert feature or product) made your (insert industry) workflow easier to manage?

You can phrase these as Likert, binary or open-ended questions, depending on the question.

Demographic question examples

If you’re interested in the broad strokes of your user base, it’s helpful to ask them questions about themselves. That’s where demographics come in. Demographic questions can be helpful in understanding which segments you are serving well and which you are underserving. Some examples include:

- What gender do you identify as?

- What is your educational level?

- What industry do you work in?

- What is your job title?

- What is your employment status?

- What is your income?

- What is your relationship status?

- Where do you live?

- What is your zip code?

- What is your ethnic background?

Psychographic question examples

These delve deeper into a persona than demographic questions, answering the why rather than the what. They are about a person’s motivations, wants, desires, preferences, self-image, etc. Some examples include:

- Are you a member of a religious denomination?

- Are you a member of a political organization?

- How do you feel about (insert issue)?

- What do you prioritize when (insert activity)?

- Do you prefer (insert option) or (insert other option)?

- How much time do you spend on (insert activity)?

- How do you see yourself when it comes to (insert trait)?

- What beliefs would motivate you to (insert activity)?

- How long do you spend on (insert activity) every week?

- Where do you get your news?

- Which is your favorite social media platform?

Level of satisfaction questions

The bread and butter of any good CSAT survey, these questions measure how your customers actually feel about your product and how satisfied they are with it. Some examples include:

- How was your experience with (insert feature or service) today?

- Are you satisfied with (insert feature or service)?

- How would you rate your (insert recent interaction) with us?

- Are you enjoying (insert feature or product)?

- Were you satisfied with your onboarding experience?

- How would you rate your recent customer support experience?

How should you distribute your survey?

There are lots of tools that you can use, from dedicated software to simple solutions like Google Forms.

Some pointers to keep in mind:

- If users are prompted to complete a survey in a mobile app, time the prompt for right after they have used the app.

- If you are sending out a link they can open on any device, make sure to test the experience and responsiveness first.

- If you want to collect your answers in an Excel workbook or a Google Sheet, there are lots of tools that connect to those.

- If you want to first write the survey in Word or Google Docs, you can do that too, but make sure to always look at the final UX of the survey you send out.

Example CSAT surveys

If you're still a little unsure about what your CSAT survey should look like, we've got you covered with three examples:

- A really short CSAT survey asking users to rate a support experience

- A medium-sized survey asking them to rate their satisfaction with a newly released feature

- A long CSAT survey asking them for a more comprehensive view of your company

The short CSAT survey

Surveys like this one are best delivered in-app, right after a user has had a support experience or a ticket has been closed. They typically use Likert scales and look something like this:

How satisfied were you with your support experience today?

- Very satisfied

- Somewhat satisfied

- Neutral

- Somewhat dissatisfied

- Very dissatisfied

Because these are short and targeted, you can generally expect response rates to be high without having to offer any sort of incentive.

The medium-length CSAT survey

You can send surveys like this out when you launch a new feature or product and want to understand how satisfied someone was with it. You can send these in-app, but emails with a link to the survey are far more common since these do tend to run a little long. Here's a little example we prepared for you:

Were you satisfied with using (name of new feature) today?

- Yes

- No

Please elaborate.

- (Text box)

Do you agree with the statement: '(Feature name) helps me solve (problem)'?

- Strongly Agree

- Agree

- Don't Know

- Disagree

- Strongly Disagree

The long CSAT survey

Surveys like this one are intended to give you a more complete understanding of how someone feels about your company and its products. Because they include a lot more questions than the surveys above, you should expect response rates to drop and may even have to offer incentives (like in-app credits or gift cards) to entice people to respond.

Netflix has a great example of a survey like this, which we've recreated below:

How satisfied are you with Feature A?

- Very Satisfied

- Somewhat Satisfied

- Neutral

- Somewhat Dissatisfied

- Very Dissatisfied

You indicated you were 'Somewhat Dissatisfied'. Please elaborate.

- (Text box)

How satisfied are you with feature B?

- Very Satisfied

- Somewhat Satisfied

- Neutral

- Somewhat Dissatisfied

- Very Dissatisfied

You indicated you were 'Somewhat Satisfied'. Please elaborate.

- (Text box)

How satisfied are you with your overall experiences with our customer success and support team?

- Very satisfied

- Somewhat satisfied

- Neutral

- Somewhat dissatisfied

- Very dissatisfied

Would you use our platform again?

- Yes

- No

You indicated that you would use our platform again! We'd love to know why.

- (Text box)

What to do after sending out CSAT surveys

So you’ve sent out your CSAT surveys and compiled the responses. Here’s what’s next:

- Calculate your CSAT score

- Compare your score with industry benchmarks to see where you land

- Do more research to identify areas of improvement

- Follow up with your customers consistently

- Continue to measure your CSAT score and improve it

Calculate CSAT

Firstly, you’ll need to calculate your CSAT score to figure out where you stand. We have an article about how to calculate CSAT, so make sure you read that.
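For orientation, one common convention is to report CSAT as the percentage of respondents who picked one of the top satisfaction options. The sketch below assumes a 5-point scale where 4 and 5 count as "satisfied"; the function name, the threshold and the sample ratings are all hypothetical, and your own survey may call for a different cut-off.

```python
# Assumption: ratings use a 5-point scale, where
# 5 = "Very satisfied" and 1 = "Very dissatisfied".
SATISFIED_THRESHOLD = 4  # hypothetical cut-off: 4 and 5 count as satisfied


def csat_score(ratings):
    """Return the percentage of ratings at or above the threshold."""
    if not ratings:
        raise ValueError("need at least one response to compute CSAT")
    satisfied = sum(1 for r in ratings if r >= SATISFIED_THRESHOLD)
    return 100.0 * satisfied / len(ratings)


# Made-up sample responses, for illustration only.
sample_ratings = [5, 4, 3, 5, 2, 4, 1, 5, 4, 3]
print(f"CSAT: {csat_score(sample_ratings):.1f}%")
```

Neutral answers (a 3 here) count against the score under this convention, which is worth keeping in mind when you compare your number with anyone else's.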

Compare with industry benchmarks

Once you’ve calculated your CSAT score, you’ll want to compare it to industry CSAT benchmarks to see how you stack up.

Do more research to identify the issues

Once you’ve evaluated how well, or how poorly, you’re doing, you’ll need to do a deep dive into the responses and identify which customers to follow up with for more information.

A great tool to use here is a session replay tool, which lets you watch video-like recordings of users using your product to understand what isn't working and which users you need to follow up with. Fullview Session Replays is one such tool. Use it in conjunction with Fullview Console Logs to see what problems and errors users encountered during their session so you know exactly what feedback to solicit. Fullview Session Replays also automatically detects and warns you when a user shows signs of frustration (like rage clicking) in your app.

Follow up to increase customer satisfaction

If you’re feeling down about the fact that your CSAT scores are not quite up to standard and notice that many people have responded negatively, don’t lose hope yet! Reaching out to customers can still go a long way towards ensuring they don’t abandon your product.

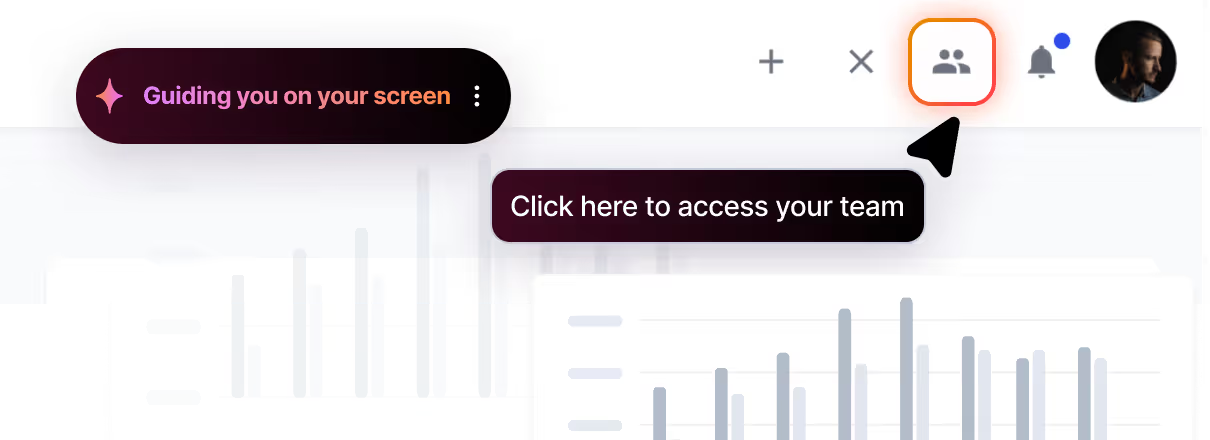

A great way to reach out to them is via cobrowsing, which is a much more immediate solution than sending out an email and hoping to receive a reply. Once you notice the user you want to speak to is online in your product, you can use a tool like Fullview Cobrowsing to call them in-app at the touch of a button.

Fullview Cobrowsing allows you to speak to your customers straight from within your product, so you don’t have to send out meeting invites or Zoom links. You can also cobrowse and control their screen right along with them to get them smoothly past sticking points or troubleshoot easily by looking at console information in the Fullview Console sidebar, available right on the call.

You can also use this as an opportunity to ask them for more detailed feedback and take notes on product and/or service improvements they suggest.

Continue to measure and improve

CSAT isn’t a one-and-done sort of thing. It’s a metric that you will have to measure on a continual basis to see exactly how it evolves over time. You’ll need to keep a close eye on these trends to make sure that you are still meeting customer expectations and still creating memorable experiences for them that result in satisfied, loyal customers.
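One simple way to watch the trend is to group responses by month and compute the score for each group. This is only a minimal sketch: it assumes each response is stored as a (date, rating) pair on a 5-point scale, treats ratings of 4 and above as satisfied, and uses made-up sample data and a hypothetical function name.

```python
from collections import defaultdict
from datetime import date


def monthly_csat(responses, threshold=4):
    """Map (year, month) to the percentage of ratings >= threshold."""
    buckets = defaultdict(list)
    for day, rating in responses:
        buckets[(day.year, day.month)].append(rating)
    return {
        month: 100.0 * sum(r >= threshold for r in ratings) / len(ratings)
        for month, ratings in sorted(buckets.items())
    }


# Made-up sample responses, for illustration only.
sample_responses = [
    (date(2024, 1, 5), 5),
    (date(2024, 1, 20), 2),
    (date(2024, 2, 3), 4),
    (date(2024, 2, 14), 5),
    (date(2024, 2, 21), 3),
    (date(2024, 2, 27), 4),
]

for (year, month), score in monthly_csat(sample_responses).items():
    print(f"{year}-{month:02d}: {score:.1f}%")
```

Months with only a handful of responses will swing widely, so it helps to look at the response count alongside each score before reading too much into a change.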

Wrapping things up

Measuring your customer satisfaction score is essential for any support team. CSAT surveys are a useful tool to measure customer satisfaction and solicit feedback from customers. To write effective CSAT survey questions, keep them short and targeted, meet users where they are, and experiment with different forms of questions and answer options. After sending out CSAT surveys, calculate your CSAT score, evaluate the responses, follow up with customers, and continue measuring and improving CSAT over time to meet customer expectations.
